71% of the top 100 Spotify artists in the UK are "self-taught", but is that really so surprising?

The stats indicate that you no longer need to be able to play an instrument to make music

The Baron of Techno on making his seminal album, how he approaches remixes and why ’90s sounds are back in fashion

"You could be the greatest guitar player in the world but a ten-year-old could learn to play that in an hour", he says of the "magic" melodic moments he loves to discover in songwriting

It sounds like Taylor’s been raiding the ’80s synth department

Betts wrote some of the Allman Brothers' biggest hits, including Ramblin’ Man, influencing a generation of players with a sound that threw all kinds of styles into the pot and made it work

Entry-level devices for rekordbox, Serato, Traktor and more – perfect for beginner DJs

It’s the guitar feelgood story of the year as the Creed/Alter Bridge guitarist is reunited with his “prized possession” after his superhero manager Tim Tournier tracked it down for his 50th birthday

Designed to encourage adventurous sonic exploration, MYTH uses machine learning to resynthesize samples into complex oscillators and transform their timbre

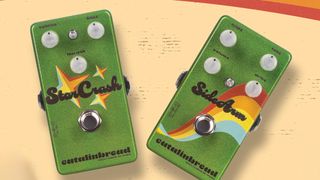

Welcome to the '70s, again, with the StarCrash offering a bias-equipped take on a silicon Fuzz Face and the SideArm a more tweakable version of that lil' green overdrive

And a fine Thumpin' Thursday to you, too, as Boss effectively delivers a full pro-quality digital rig for your consideration

Make with the funky drumming in this speedy Live 12 tutorial

Want to avoid replacing your studio headphones sooner than you need to? We’ve got a range of tips to keep them in prime condition for longer, from the studio to the road

Including delta blues, gypsy jazz, country, medieval, folk and bluegrass

Head like a… E5addb9

Set your reverb pedals to stun

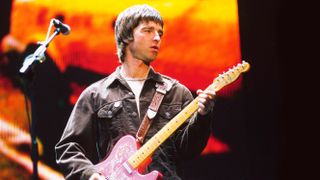

I'm feeling supersonic, give me F#m11/C#

The essential tools for a blues jam are all here – complete with a backing track to try them out on

This affordable multi-effects pedal looks to tempt beginner guitar players away from the practice amp with loads of functionality and sounds

This all-in-one room correction package means no more excuses for a muddy low end

It’s designed for snare drums. It’s a microphone. It’s the Lauten Audio Snare Mic

The Swedish developer launches a sample-based drum machine. We give it a shot

A feature-packed, great value-for-money audio interface that could be the perfect companion for live shows and studio work

A solid and affordable off-the-shelf monitoring option

Headphones designed for both recording and mixing? We're intrigued

Well-designed coaxial monitors can offer substantial sonic benefits. We hook one up